A major user-generated content platform was hit by a five-hour distributed denial-of-service assault that generated 2.45 billion malicious requests from 1.2 million unique Internet Protocol addresses, underscoring how attackers are shifting from brute-force traffic floods to carefully distributed campaigns designed to bypass conventional rate limits.

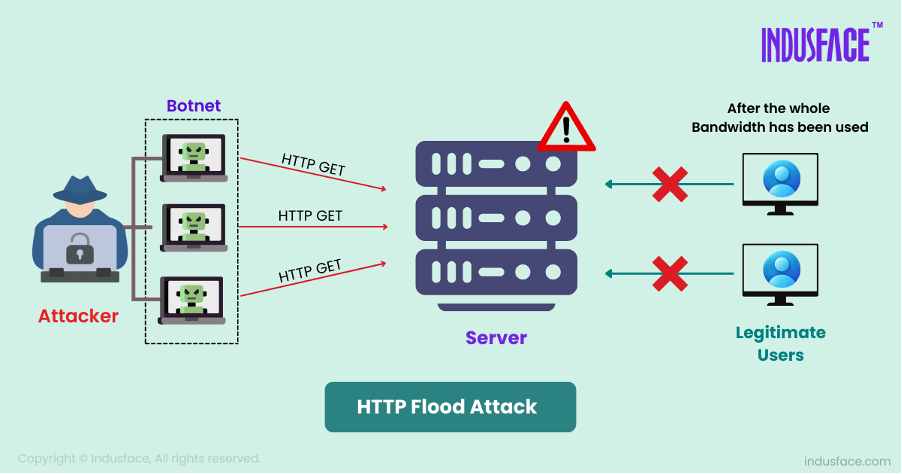

The attack, intercepted by DataDome in real time, did not cause disruption for legitimate users, but its scale and structure have drawn attention across the cybersecurity industry. The operation averaged about 136,000 requests per second and reached a peak of more than 205,000 requests per second, while spreading activity across more than 16,000 autonomous systems. Each source sent traffic at a low enough rate to avoid triggering simple per-IP controls, making the campaign a high-volume “low and slow” assault rather than a classic web flood.
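The reported totals make the "low and slow" character easy to check with back-of-the-envelope arithmetic. The sketch below uses only the figures quoted above and assumes, purely for illustration, that the attack lasted exactly five hours and that requests were spread evenly across all sources; real traffic would have been uneven. The 60-requests-per-minute threshold is a hypothetical example of a per-IP limit, not a value from the incident.

```python
# Figures reported for the incident.
TOTAL_REQUESTS = 2_450_000_000
UNIQUE_IPS = 1_200_000
DURATION_SECONDS = 5 * 60 * 60  # assumption: exactly five hours

# Hypothetical per-IP limit, chosen only for comparison.
EXAMPLE_PER_IP_LIMIT_PER_MINUTE = 60


def per_ip_rates(total_requests, unique_ips, duration_seconds):
    """Return (aggregate req/s, requests per IP, requests per IP per minute).

    Assumes requests are spread evenly across sources and time.
    """
    aggregate_rps = total_requests / duration_seconds
    requests_per_ip = total_requests / unique_ips
    per_ip_per_minute = requests_per_ip / (duration_seconds / 60)
    return aggregate_rps, requests_per_ip, per_ip_per_minute


aggregate_rps, requests_per_ip, per_ip_per_minute = per_ip_rates(
    TOTAL_REQUESTS, UNIQUE_IPS, DURATION_SECONDS
)

print(f"Aggregate rate: {aggregate_rps:,.0f} requests/second")
print(f"Average per IP: {requests_per_ip:,.0f} requests in total")
print(f"Average per IP: {per_ip_per_minute:.1f} requests/minute")
print("Below example limit:", per_ip_per_minute < EXAMPLE_PER_IP_LIMIT_PER_MINUTE)
```

Under those assumptions the aggregate rate matches the reported average of about 136,000 requests per second, while each individual address averages only a handful of requests per minute.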

The target has not been publicly identified, but the description of a user-generated content platform points to the type of online service where availability, trust and moderation are central to business continuity. Such platforms rely on constant user access, advertising flows, creator engagement and search visibility, making even short outages commercially damaging.

Threat researchers found that the attackers varied the pace of their requests to reset detection thresholds and blended malicious traffic through privacy-focused networks, legitimate cloud infrastructure and widely distributed hosting providers. The method exposed a weakness in security models that judge traffic sources in isolation. When each IP address appears only mildly active, the hostile pattern becomes visible only through correlation across time, geography, network ownership and request behaviour.
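The gap between judging sources in isolation and correlating them can be illustrated with a minimal sketch. It is not DataDome's method; the function name, the thresholds and the synthetic traffic are all assumptions made for the example, and the autonomous system numbers come from the range reserved for documentation. The idea is simply that the same window of traffic is counted twice, once per IP and once per network owner.

```python
from collections import Counter


def flag_sources(events, per_ip_limit, per_asn_limit):
    """Count requests in one time window per IP and per autonomous system.

    events: iterable of (ip, asn) pairs, one pair per request.
    Returns (flagged_ips, flagged_asns) as sets.
    """
    events = list(events)  # allow generators; we iterate twice
    ip_counts = Counter(ip for ip, _ in events)
    asn_counts = Counter(asn for _, asn in events)
    flagged_ips = {ip for ip, n in ip_counts.items() if n > per_ip_limit}
    flagged_asns = {asn for asn, n in asn_counts.items() if n > per_asn_limit}
    return flagged_ips, flagged_asns


# Synthetic one-minute window (all values hypothetical):
# 500 sources in AS64500 and 10 sources in AS64501, 7 requests each.
events = []
for i in range(500):
    events += [(f"attacker-{i}", 64500)] * 7
for i in range(10):
    events += [(f"visitor-{i}", 64501)] * 7

flagged_ips, flagged_asns = flag_sources(
    events, per_ip_limit=60, per_asn_limit=1000
)
print("IPs over per-IP limit:", len(flagged_ips))
print("Autonomous systems over aggregate limit:", sorted(flagged_asns))
```

No single address exceeds the per-IP limit, yet the aggregate for one network stands out. In practice such a signal would more plausibly trigger closer inspection than an outright block, since legitimate users share the same networks.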

The campaign also showed a middle tier of technical sophistication. The traffic attempted to appear legitimate through forged headers, cookies and URL parameters, while using basic fingerprint and transport-layer obfuscation. There was no clear evidence of advanced browser automation or high-grade JavaScript forgery, suggesting that the attack relied more on distribution, timing and infrastructure depth than on elite stealth tooling.

Cybersecurity specialists see the incident as part of a wider change in DDoS activity. High-volume attacks once focused mainly on overwhelming bandwidth or exhausting server capacity through obvious spikes. Attackers now combine application-layer pressure with botnet reach, proxy infrastructure and cloud services to mimic normal usage patterns. This makes mitigation harder for websites, mobile applications and application programming interfaces that depend on static thresholds or simple reputation lists.

The figures also reflect a broader escalation in global DDoS activity. More than eight million DDoS attacks were recorded worldwide in the second half of 2025, while large botnets and AI-assisted tooling have strengthened the ability of attackers to coordinate traffic across regions and network providers. Separate industry data has shown that large application-layer and volumetric attacks are becoming shorter, faster and more automated, requiring defence systems to make decisions within seconds.

For businesses, the lesson is that rate limiting alone is no longer sufficient. Per-IP controls remain useful against unsophisticated floods, but distributed campaigns can keep each node beneath the blocking threshold. Effective mitigation now depends on behavioural analytics, device and session intelligence, autonomous system-level correlation, anomaly detection and the ability to distinguish human users from automated traffic without adding friction for genuine visitors.
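How a paced source stays beneath a blocking threshold can be shown with a simple fixed-window limiter. This is an illustrative sketch under stated assumptions, not a description of any vendor's product: the class name, the 60-per-minute and 2,000-per-hour limits and the client's steady 50 requests per minute are all hypothetical. The same request stream is fed to a short-window limiter and to a longer-horizon one.

```python
from collections import defaultdict


class FixedWindowLimiter:
    """Allow at most `limit` requests per key in each fixed time window."""

    def __init__(self, limit, window_seconds):
        self.limit = limit
        self.window_seconds = window_seconds
        self.counts = defaultdict(int)  # (key, window index) -> request count

    def allow(self, key, timestamp):
        window = int(timestamp // self.window_seconds)
        self.counts[(key, window)] += 1
        return self.counts[(key, window)] <= self.limit


# Hypothetical limits: 60 requests per minute, 2,000 requests per hour.
per_minute = FixedWindowLimiter(limit=60, window_seconds=60)
per_hour = FixedWindowLimiter(limit=2000, window_seconds=3600)

blocked_by_minute = 0
blocked_by_hour = 0

# One client sends 50 requests in every minute for five hours,
# always staying under the per-minute limit.
for minute in range(300):
    for second in range(50):
        timestamp = minute * 60 + second
        if not per_minute.allow("client-1", timestamp):
            blocked_by_minute += 1
        if not per_hour.allow("client-1", timestamp):
            blocked_by_hour += 1

print("Blocked by per-minute limit:", blocked_by_minute)
print("Blocked by per-hour limit:", blocked_by_hour)
```

The short window never fires because the counter resets before the limit is reached, while the longer horizon catches the sustained volume. A longer window alone would also penalise heavy legitimate users, which is why the article's sources point to behavioural and cross-source signals rather than simply tighter thresholds.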

The attack also highlights the growing importance of protecting application logic, not only network pipes. Application-layer DDoS activity can target login pages, search endpoints, product listings, comment systems, content feeds or APIs, forcing platforms to spend computing resources on requests that appear plausible. Unlike raw bandwidth floods, these attacks may consume database, authentication, rendering or recommendation-system capacity while staying within normal traffic envelopes.

Cloud and proxy infrastructure played a notable role in the campaign’s ability to blend in. Legitimate providers are increasingly abused because their networks carry large volumes of trusted traffic, making blanket blocking risky. Residential and privacy networks add another layer of complexity by making malicious requests appear geographically dispersed and consumer-like. Security teams are therefore under pressure to move from binary allow-or-block rules to adaptive controls that assess intent.

The incident also carries regulatory and reputational implications. Platforms handling user-generated content must maintain availability while protecting personal data, content workflows and moderation pipelines. An outage can affect not only customer experience but also contractual service levels, advertiser confidence and public trust. Even when no breach occurs, a successful disruption can be used by criminal groups for extortion or as a distraction for parallel intrusion attempts.

DataDome’s interception of the attack without visible service interruption will be viewed by many security teams as evidence that automated, behaviour-led mitigation is becoming central to web defence. The larger concern is that the underlying model is replicable. A campaign that sends billions of requests without tripping simple rate limits can be adapted against e-commerce sites, media networks, travel platforms, financial services portals and public-sector services that depend on high availability.

The pressure on defenders is likely to intensify as botnet operators gain access to larger pools of compromised devices, rented proxies and cloud-hosted resources. Attackers do not need every request to be technically advanced when the overall traffic pattern is sufficiently distributed. That reality is forcing a reassessment of DDoS readiness, with emphasis shifting from capacity planning alone to continuous traffic intelligence, faster automated response and cross-layer visibility across infrastructure, applications and users.
